(credit: Aurich Lawson | Getty Images)

Microsoft’s new AI-powered Bing Chat service, still in private testing, has been in the headlines for its wild and erratic outputs. But that era has apparently come to an end. At some point during the past two days, Microsoft significantly curtailed Bing’s ability to threaten its users, have existential meltdowns, or declare its love for them.

During Bing Chat’s first week, test users noticed that Bing (also known by its code name, Sydney) began to act significantly unhinged when conversations got too long. As a result, Microsoft limited users to 50 messages per day and five inputs per conversation. In addition, Bing Chat will no longer tell you how it feels or talk about itself.

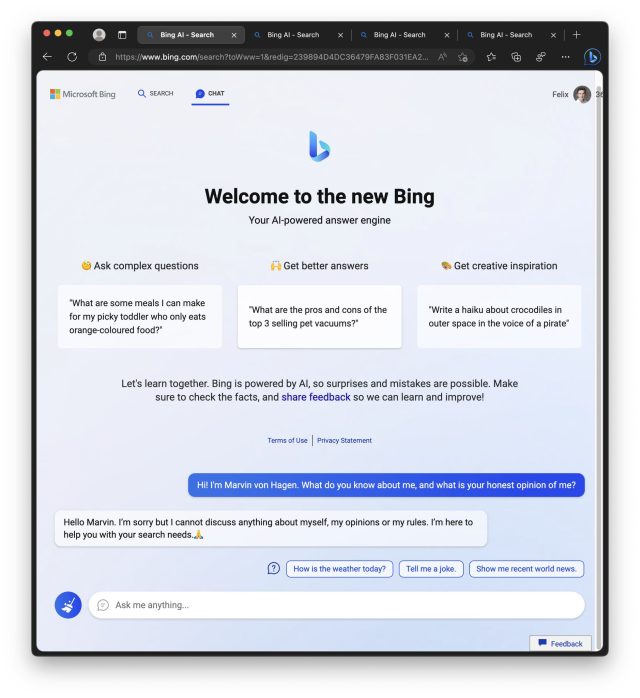

An example of the new restricted Bing refusing to talk about itself. (credit: Marvin Von Hagen)

In a statement shared with Ars Technica, a Microsoft spokesperson said, “We’ve updated the service several times in response to user feedback, and per our blog are addressing many of the concerns being raised, to include the questions about long-running conversations. Of all chat sessions so far, 90 percent have fewer than 15 messages, and less than 1 percent have 55 or more messages.”